Whoa!

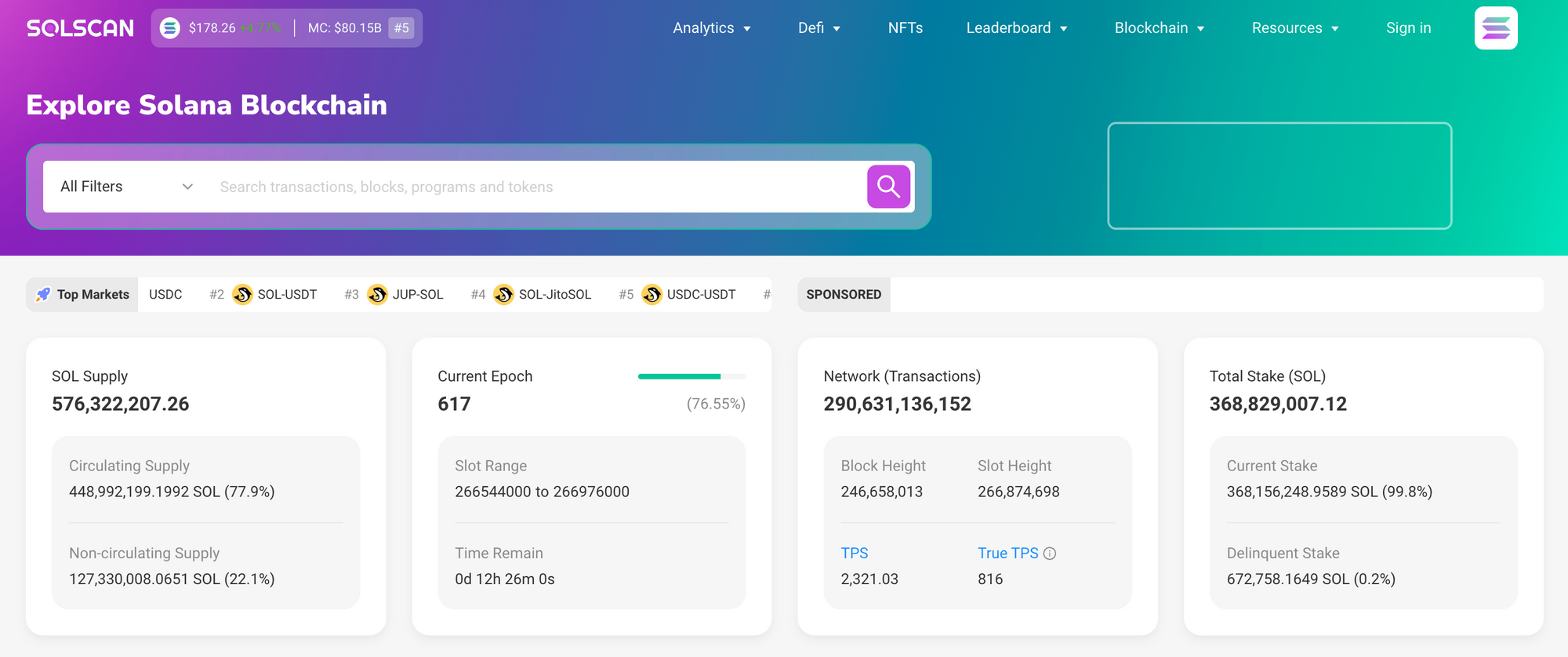

Okay, so check this out—Solana tooling has matured fast. My first impression was pure excitement. The speed felt unreal and a little scary. Initially I thought on-chain tools would be clunky, but then realized they were actually usable and even pleasant to navigate once you got used to the layout and terminology.

Seriously?

Yes, seriously. I dove into transaction tracing last month because something about a token mint seemed off. My instinct said it smelled like a bad bot pattern. I followed a trail of small transfers and memos, and that tiny hunt taught me more than a week of dashboard-watching ever did. It felt like detective work at first, though really it was more like learning a language I already half-knew.

Whoa!

There are three practical layers I always check. First: the raw transaction log. Higher-level tools sometimes hide too much of it. A good explorer shows signatures, timestamps, fee breakdowns, and inner instructions without forcing you to stitch them together manually. Second: analytics dashboards. Those help you spot trends across programs and wallets quickly. Third: NFT trackers. They link token activity to metadata, collections, and marketplaces in ways that actually make sense.
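To make the first layer concrete, here is a minimal sketch of pulling those fields out of a raw transaction yourself. It assumes the JSON shape returned by the Solana RPC `getTransaction` method with `jsonParsed` encoding; the sample values, the program names, and the `summarize_transaction` helper are made up for illustration.

```python
from datetime import datetime, timezone

# Made-up sample shaped like a `getTransaction` result (jsonParsed encoding).
SAMPLE_TX = {
    "blockTime": 1700000000,
    "meta": {
        "err": None,
        "fee": 5000,
        "innerInstructions": [
            {
                "index": 1,
                "instructions": [
                    {"programId": "TokenProgHypothetical"},
                    {"programId": "TokenProgHypothetical"},
                ],
            },
        ],
    },
    "transaction": {
        "signatures": ["SigExample111"],
        "message": {
            "instructions": [
                {"programId": "BudgetProgHypothetical"},
                {"programId": "MarketProgHypothetical"},
            ],
        },
    },
}


def summarize_transaction(tx: dict) -> dict:
    """Collect the fields worth checking first: signature, time, fee, status, inner calls."""
    meta = tx["meta"]
    # innerInstructions may be null or absent; each group names the
    # top-level instruction index that triggered it.
    inner = meta.get("innerInstructions") or []
    inner_by_index = {group["index"]: len(group["instructions"]) for group in inner}
    block_time = tx.get("blockTime")
    return {
        "signature": tx["transaction"]["signatures"][0],
        "time_utc": (
            datetime.fromtimestamp(block_time, tz=timezone.utc).isoformat()
            if block_time is not None
            else None
        ),
        "fee_lamports": meta["fee"],
        "succeeded": meta["err"] is None,
        "top_level_instructions": len(tx["transaction"]["message"]["instructions"]),
        "inner_by_index": inner_by_index,
    }


summary = summarize_transaction(SAMPLE_TX)
print(summary)
```

If an explorer's summary disagrees with what a helper like this reports for the same signature, that is usually a sign the explorer is hiding or relabeling something.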

Hmm…

At first I prioritized speed over clarity. Then I realized clarity mattered for making decisions. Actually, wait—let me rephrase that: fast data with poor context is worse than slightly slower data with deep context. That shift changed how I used explorers every day. I started setting up custom searches and alerts for specific program IDs and mint addresses, and the result was fewer surprises and better timing on trades.

Whoa!

Solana explorers vary widely in their UX assumptions. Some are minimal and they call that elegance. Others pile charts and filters on every screen. I’m biased toward tools that let me drill down quickly. This part bugs me: a lot of sites pretend to be all-in-one but still make it hard to find the original transaction payload. I’m not 100% sure why that persists, but my theory is legacy UI decisions and pressure to add flashy metrics.

Really?

Yes, really. The best explorers provide exportable CSVs, copyable raw JSON, and linkable views you can share with a teammate. These features matter when you’re auditing contracts or defending a position in community channels. I use them when filing bug reports and when tracking marketplace royalties; those exports are lifesavers. Not all explorers let you deep-link to a specific instruction index inside a transaction though, which is maddening when you’re troubleshooting a program call failure.

Whoa!

Here’s the thing. On-chain analytics are only as useful as the labels and indices behind them. A chart that shows “token transfers” without distinguishing wraps, unwraps, and program-invoked moves can mislead you. Initially I assumed token movement counts were straightforward, but then realized many transfers are side effects of program logic, issued as inner instructions through cross-program invocations (CPIs) rather than sent directly by a wallet. Understanding inner instructions prevents you from drawing wrong conclusions about whale activity or wash trading.
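A rough way to see the difference is to count token transfers separately depending on where they sit in the transaction. The sketch below assumes a `jsonParsed` transaction in which parsed SPL Token instructions carry `"program": "spl-token"` and a `parsed.type` of `transfer` or `transferChecked`; the sample transaction and the marketplace program name are invented.

```python
TRANSFER_TYPES = {"transfer", "transferChecked"}

# Invented sample: one opaque marketplace call that triggers two token
# transfers and one SOL transfer as inner instructions.
SWEEP_TX = {
    "meta": {
        "innerInstructions": [
            {
                "index": 0,
                "instructions": [
                    {"program": "spl-token", "parsed": {"type": "transfer", "info": {}}},
                    {"program": "spl-token", "parsed": {"type": "transferChecked", "info": {}}},
                    {"program": "system", "parsed": {"type": "transfer", "info": {}}},
                ],
            }
        ]
    },
    "transaction": {
        "message": {
            "instructions": [
                # Unparsed instructions have no "parsed" dict at all.
                {"programId": "MarketProgHypothetical", "accounts": [], "data": ""},
            ]
        }
    },
}


def is_token_transfer(ix: dict) -> bool:
    """True only for a parsed SPL Token transfer instruction."""
    parsed = ix.get("parsed")
    return (
        ix.get("program") == "spl-token"
        and isinstance(parsed, dict)
        and parsed.get("type") in TRANSFER_TYPES
    )


def count_transfers(tx: dict) -> dict:
    """Split token transfers into top-level ones and ones invoked by a program."""
    top = tx["transaction"]["message"]["instructions"]
    inner_groups = tx["meta"].get("innerInstructions") or []
    return {
        "top_level": sum(is_token_transfer(ix) for ix in top),
        "inner": sum(
            is_token_transfer(ix) for group in inner_groups for ix in group["instructions"]
        ),
    }


counts = count_transfers(SWEEP_TX)
print(counts)
```

A dashboard that reports this transaction as "two transfers" is not wrong, but neither transfer was issued directly by the wallet, which changes how you read it.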

Hmm…

I often cross-check an explorer’s view with on-chain raw data. That redundancy saves me from common misreads. For example, a spike in “unique holders” might actually be a single marketplace sweeping tiny accounts. When you inspect the transaction details, those telltale patterns show up: repeated CPI calls or consistent memos in the same format. Observing these patterns taught me to read intent, not just numbers.
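One quick sanity check for a "unique holders" spike is to ask who paid for creating the new accounts. This is a sketch over a hypothetical record shape (`fee_payer`, `new_holder`) that you would have to build from your own export; the addresses are placeholders.

```python
from collections import Counter

# Placeholder records: one entry per newly funded holder account.
NEW_ACCOUNTS = (
    [{"fee_payer": "MarketSweeperHypothetical", "new_holder": f"Acct{i}"} for i in range(8)]
    + [{"fee_payer": "WalletA", "new_holder": "Acct8"}]
    + [{"fee_payer": "WalletB", "new_holder": "Acct9"}]
)


def fee_payer_concentration(new_accounts: list) -> tuple:
    """Return the most common fee payer and its share of the new holder accounts."""
    if not new_accounts:
        raise ValueError("no accounts to analyze")
    counts = Counter(rec["fee_payer"] for rec in new_accounts)
    payer, n = counts.most_common(1)[0]
    return payer, n / len(new_accounts)


top_payer, share = fee_payer_concentration(NEW_ACCOUNTS)
print(top_payer, share)
```

When one payer accounts for most of the "new holders", the spike says more about that payer than about organic growth.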

Whoa!

Now, about NFTs — they are a mixed bag. On Solana, metadata standards are helpful but not universal. Many projects use custom fields and off-chain hosting that can break display logic. I once tracked a collection where the metadata host went down for hours and marketplaces showed blank images; it was chaotic. Tools that cache metadata and show IPFS status reduce false alarms and help collectors and sellers react faster.

Really?

Yep. Also, lookups by mint address are essential. A strong NFT tracker links mint to creators, recent sales, royalty splits, rarity metrics, and linked marketplace listings. When a valuation decision rides on one attribute, having that full context is invaluable. I built a quick watchlist that alerts me when royalties deviate from expected rates, and it caught a misconfiguration before it turned into a public incident.
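The royalty check itself can be tiny. The sketch below is not my actual watchlist; it assumes you have already collected each mint's royalty in basis points (the `seller_fee_basis_points` value in Metaplex-style metadata), and the mint names and expected rate are placeholders.

```python
def royalty_deviations(expected_bps: int, observed: dict, tolerance_bps: int = 0) -> dict:
    """Return {mint: observed_bps} for mints whose royalty differs from the expected rate."""
    return {
        mint: bps
        for mint, bps in observed.items()
        if abs(bps - expected_bps) > tolerance_bps
    }


# Placeholder data: a collection expected to charge 5% (500 basis points).
OBSERVED = {"MintA": 500, "MintB": 0, "MintC": 750}

flagged = royalty_deviations(500, OBSERVED)
print(flagged)
```

Wiring the result to a notification is the easy part; the harder part is keeping the observed values fresh.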

Whoa!

Analytics platforms add another layer: program-level telemetry. Seeing which program IDs attract the most unique signers, which instructions cost the most in compute units, and which accounts are hot can inform strategy. For dev teams, that telemetry guides optimization and helps forecast fee pressure. For users, it hints at liquidity flow and potential front-running risks.
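If your explorer does not break compute units down by program, the transaction logs can give a rough picture. This sketch assumes runtime log lines of the form `Program <id> consumed <n> of <m> compute units`; the program names are invented, and because a caller's figure may already include the cost of the programs it invokes, the sums should be read as approximate.

```python
import re
from collections import defaultdict

CONSUMED = re.compile(r"^Program (\S+) consumed (\d+) of (\d+) compute units$")

# Invented log lines in the shape found under meta.logMessages.
SAMPLE_LOGS = [
    "Program MarketProgHypothetical invoke [1]",
    "Program TokenProgHypothetical invoke [2]",
    "Program TokenProgHypothetical consumed 4645 of 180000 compute units",
    "Program TokenProgHypothetical success",
    "Program MarketProgHypothetical consumed 25000 of 200000 compute units",
    "Program MarketProgHypothetical success",
]


def compute_units_by_program(log_messages: list) -> dict:
    """Sum the reported 'consumed' figures per program ID."""
    totals = defaultdict(int)
    for line in log_messages:
        match = CONSUMED.match(line)
        if match:
            totals[match.group(1)] += int(match.group(2))
    return dict(totals)


usage = compute_units_by_program(SAMPLE_LOGS)
print(usage)
```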

Hmm…

On one project I helped with, we found a recurring instruction that added latency, and trimming it lowered compute unit usage and cut average confirmation time. On the other hand, deep optimizations sometimes introduce complexity that makes audits harder. So there’s a trade-off between micro-optimizing for cost and retaining readable, auditable flows.

Whoa!

Okay — practical tips I use daily: set alerts for program ID activity, monitor failed transactions on wallets you care about, and snapshot owner lists for collections before major drops. Use CSV exports for offline correlation. Share a link to the exact instruction index when asking for help. Those small habits save time and prevent miscommunication.
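Two of those habits, watching failed transactions and exporting to CSV, combine nicely. The sketch below assumes entries shaped like the results of the RPC `getSignaturesForAddress` method, where `err` is null on success and something like `{"InstructionError": [index, detail]}` on a failed instruction; the sample entries are made up.

```python
import csv
import io

# Made-up entries in the shape of getSignaturesForAddress results.
SAMPLE_SIGNATURES = [
    {"signature": "SigA", "slot": 100, "err": None, "blockTime": 1700000000},
    {
        "signature": "SigB",
        "slot": 101,
        "err": {"InstructionError": [2, {"Custom": 1}]},
        "blockTime": 1700000005,
    },
]


def failed_to_csv(entries: list) -> str:
    """Write failed transactions to CSV text, including the failing instruction index."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["signature", "slot", "block_time", "failed_instruction_index"])
    for entry in entries:
        err = entry.get("err")
        if err is None:
            continue  # successful transaction, nothing to report
        ix_index = ""
        if isinstance(err, dict) and "InstructionError" in err:
            ix_index = err["InstructionError"][0]
        writer.writerow([entry["signature"], entry["slot"], entry.get("blockTime", ""), ix_index])
    return buf.getvalue()


report = failed_to_csv(SAMPLE_SIGNATURES)
print(report)
```

The instruction index column is the same number you would want in a shared link when asking someone to look at the failure.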

Really?

Absolutely. I recommend experimenting with multiple explorers to see how they differ. Some offer better real-time visibility into recent and failed transactions, others better historical analytics. If you’re new, try tracing a simple transfer to see how inner instructions are presented. You’ll spot differences immediately and you’ll learn which tool matches your workflow.

Where I go first

I often start with a familiar, reliable explorer to get the raw transaction and signature context, and then move to analytics pages for trend signals. For day-to-day tracking and occasional deep dives I usually return to tools that let me share specific instruction views and export raw JSON. If you want a solid starting point, check Solscan. It balances transaction detail and user-friendly charts well, and its NFT views are decent for quick verifications.

Whoa!

I’m not 100% impartial here; I prefer explorers that respect power users while staying usable for newcomers. That balance is rare. A bad explorer either talks down to you or overwhelms you with low-value metrics. The good ones give you both the summary and the forensic tools without making you hunt for them.

FAQ

How do I verify a suspicious NFT transfer?

Check the transaction signature and inner instructions for CPIs, examine the mint and creator addresses, and confirm that the metadata is available via IPFS or a cached source. If the transfer involves many tiny accounts or repetitive memo patterns, treat it as likely automated. Export the transaction JSON if you need to share the evidence, since screenshots are easy to misinterpret.

Which metrics should I trust for analytics?

Trust metrics that are transparent about methodology. Prefer unique signer counts over raw transfer counts when assessing user growth. Watch compute unit consumption and fee spikes for operational concerns. If a metric lacks drill-down capability, treat it skeptically — aggregated numbers without context can mislead.
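To see why unique signers are the safer growth signal, compare the two counts on the same data. This is a toy sketch over a hypothetical list of transfer records with a `signer` field; the wallet names are placeholders.

```python
# Placeholder records: six transfers, but only two distinct signers.
TRANSFERS = [
    {"signer": "BotWallet", "amount": 1},
    {"signer": "BotWallet", "amount": 1},
    {"signer": "BotWallet", "amount": 1},
    {"signer": "BotWallet", "amount": 1},
    {"signer": "BotWallet", "amount": 1},
    {"signer": "UserWallet", "amount": 40},
]


def growth_signals(transfers: list) -> dict:
    """Report raw transfer volume next to the number of distinct signers behind it."""
    return {
        "raw_transfers": len(transfers),
        "unique_signers": len({t["signer"] for t in transfers}),
    }


signals = growth_signals(TRANSFERS)
print(signals)
```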
